Cloud Migration at Full Speed - ProSiebenSat.1 Shows the Way

Successful Migration of the BI Landscape to the Cloud

Initial Situation & Challenge

Faced with significantly higher maintenance costs and increasing difficulty in keeping the BI landscape available, ProSiebenSat.1 decided to migrate its on-premises environment to the cloud.

The complexity of this migration project was due, among other things, to the size and central importance of the BI landscape: More than 80 data sources converge here and feed about 20 data products, which are used by many of the company's specialist departments. Some of these data products developed a "life of their own" over time, further increasing the complexity of the overall system to be migrated. The seven migration teams (composed of about 80 employees from ProSiebenSat.1, b.telligent and other external service providers) had numerous interdependencies in addition to a tight schedule.

This resulted in the central challenge of the migration project: The new development environment was to enable teams to work quickly, flexibly and in parallel.

Solution

Tool Selection

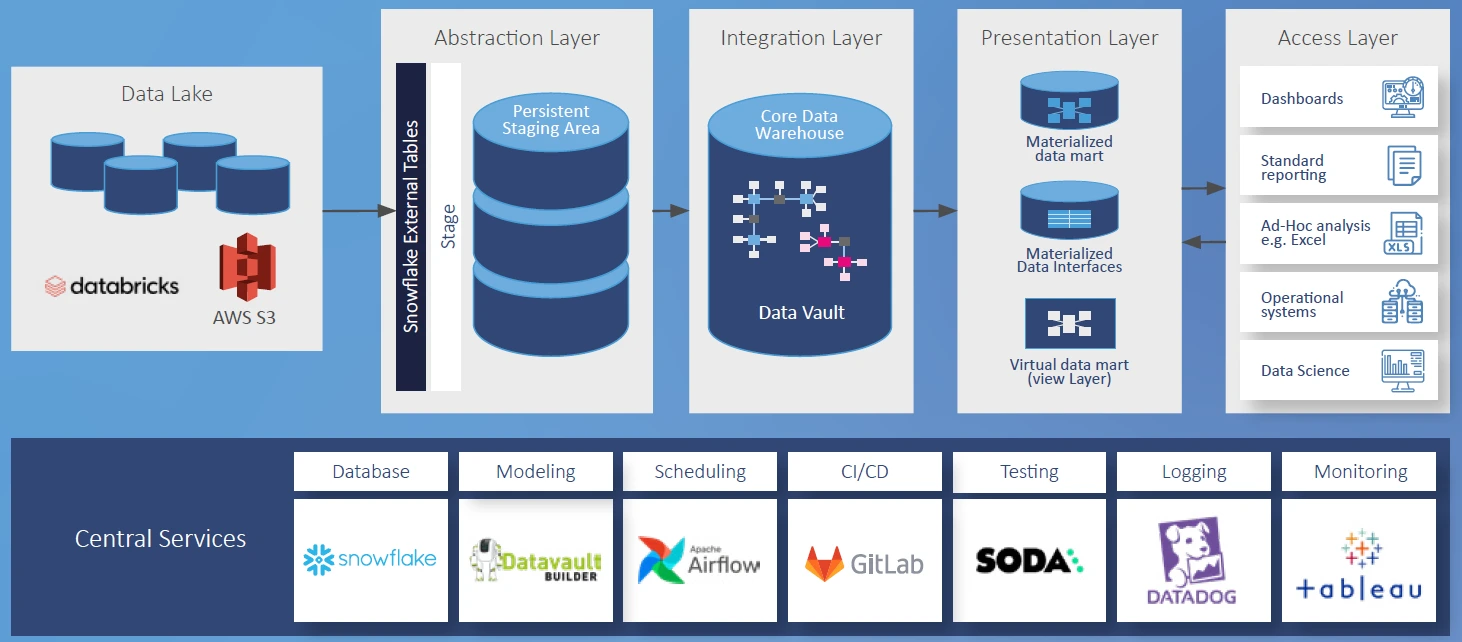

As part of the move to the cloud-based environment, all components of the BI value chain were mapped to new tools.

Processes and Architecture

The new cloud architecture basically consists of three layers: abstraction, integration and presentation.

- The abstraction layer extracts newly delivered source data from Databricks via external tables in Snowflake and stores them in a persistent staging area.

- The integration layer makes the cleaned data available in a raw vault, including historization and versioning. A business vault is also available for business-oriented interpretations of the data.

- The presentation layer serves as the user interface for the specialist departments. Here, aggregations are fetched from the raw vault and business vault in accordance with the business view, and made available in data marts. This layer is also the access layer for the reporting applications.

Although this introduces an additional interface between tools at the abstraction layer, ProSiebenSat.1 opted for a multi-vendor approach: Databricks for the data lake and Snowflake for the DWH.

Snowflake proves its worth as a DWH database with valuable features: Parallel loading of data sources and product ETL routes as well as simultaneous deployment and testing are trouble-free, thanks to transactional control and massively parallel processing (MPP). Connections to the data lake (via external tables) and to various reporting tools (via provided connectors) can also be easily implemented. The clear "star" among the Snowflake features for ProSiebenSat.1 is zero-copy cloning, which is used daily in the development process (a more detailed explanation of this feature follows in the next section).

Datavault Builder on Snowflake is used as the central DWH automation tool. With the help of process sequences (DAGs) configured in Airflow, ProSiebenSat.1 achieves very smooth and efficient orchestration from extraction from the data lake to presentation in the data marts.
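The dependency ordering that such a process sequence encodes can be illustrated with a minimal sketch. It uses only Python's standard library rather than the Airflow API, and the task names are hypothetical stand-ins for the three layers described above, not the project's actual DAG.

```python
from graphlib import TopologicalSorter

# Hypothetical task names (assumptions for illustration only).
# Each task maps to the set of tasks that must finish before it starts.
dependencies = {
    "stage_source_data": set(),                                      # abstraction layer
    "load_raw_vault": {"stage_source_data"},                         # integration layer
    "load_business_vault": {"load_raw_vault"},                       # integration layer
    "build_data_marts": {"load_raw_vault", "load_business_vault"},   # presentation layer
}


def execution_order(deps):
    """Return one valid run order in which every task follows its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

An orchestrator such as Airflow resolves the same kind of graph, and additionally handles scheduling, retries and parallel execution of tasks that do not depend on each other.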

CI/CD pipelines in GitLab are used for deployments and daily testing with Soda. The logs produced by all components are collected and processed centrally using Datadog.

In a first project phase, this architecture was tested and fine-tuned by an early adopter team. In a hackathon, all other teams then began developing and testing in this environment simultaneously, without any resulting bottlenecks or conflicts. For ProSiebenSat.1, this combination of tools and process design has proven to be optimal in meeting requirements for CI/CD, code versioning and testing.

Of particular note is the compatibility between Datavault Builder and Snowflake: The metadata generated by Datavault Builder are stored in Snowflake alongside business data, i.e. Datavault Builder does not use its own repository. Although Snowflake is a query-optimized database, it can easily handle the resultant micro-transactions (> 500k transactions per day). The true strength of this tool combination comes into play via sandboxing during the development process. Sandboxing is explained in more detail in the next chapter.

Agile Sandboxing With Datavault Builder and Snowflake

ProSiebenSat.1's development philosophy has long focused on the use of modern technologies and processes: development in sandboxes, with consistent use of Scrum and CI/CD.

The sandboxing process creates an AWS instance equipped with Datavault Builder and Airflow, as well as a dedicated warehouse in Snowflake. A feature branch is created too. Snowflake copies the data state of a selected environment along with the metadata required by Datavault Builder. Datavault Builder's architecture, which is designed to work without its own repository, is ideally suited to this.

With the introduction of Snowflake, this copying process can now be implemented much more efficiently. The decisive factor is the zero-copy cloning feature, which allows exact replicas of databases to be created within a very short time, without physically copying the data or storing it redundantly.
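As a rough sketch of what such a clone looks like in practice, the helper below builds Snowflake's `CREATE DATABASE ... CLONE ...` statement. The function name and the database names `BI_PROD` and `SANDBOX_FEATURE_123` are hypothetical; the source does not say how the project names or scripts its clones.

```python
import re

# Unquoted Snowflake identifiers: start with a letter or underscore,
# followed by letters, digits, underscores or dollar signs.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*$")


def clone_database_sql(source_db: str, sandbox_db: str) -> str:
    """Build a zero-copy clone statement (hypothetical helper, not project code)."""
    for name in (source_db, sandbox_db):
        # Reject anything that is not a plain identifier to avoid SQL injection.
        if not _IDENTIFIER.match(name):
            raise ValueError(f"not a plain identifier: {name!r}")
    return f"CREATE DATABASE {sandbox_db} CLONE {source_db};"


# Hypothetical database names, chosen for illustration only.
statement = clone_database_sql("BI_PROD", "SANDBOX_FEATURE_123")
print(statement)
```

The clone initially shares the underlying storage with its source, so additional storage is only consumed once data in the sandbox diverges from the original.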

The following diagram illustrates such a sandboxing and development process. The DEV, TEST, and PROD environments are configured identically according to the architecture described in the last chapter (Snowflake database, Datavault Builder instance, and Airflow instance). The Git branch "master" contains the metadata and code on which the three environments are based. When a development environment is created, a sandbox instance is generated and, according to the developer's choice, populated with the data from one of the three environments (PROD in this example). At the same time, a feature branch of "master" is created. Changes made to the sandbox in the development phase are synchronized to the feature branch. Once the development phase has been completed, the feature branch is merged into "master", triggering a CI/CD pipeline which deploys the development to DEV. Once this has been done successfully, developers can also deploy to TEST and then to PROD.
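The workflow described above can be modelled in a few lines. This is a simplified sketch under stated assumptions: the `Sandbox` class, the `feature/` branch prefix and both function names are hypothetical, and the real process is driven by GitLab pipelines rather than by code like this.

```python
from dataclasses import dataclass

# Promotion order taken from the text: DEV first, then TEST, then PROD.
ENVIRONMENTS = ("DEV", "TEST", "PROD")


@dataclass(frozen=True)
class Sandbox:
    feature_branch: str   # branch created from "master"
    cloned_from: str      # environment whose data populates the sandbox


def create_sandbox(feature: str, source_env: str = "PROD") -> Sandbox:
    """Describe a sandbox populated from one of the three environments."""
    if source_env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {source_env!r}")
    return Sandbox(feature_branch=f"feature/{feature}", cloned_from=source_env)


def next_deployment_target(already_deployed):
    """Return the next environment to deploy to, or None once PROD is reached."""
    done = list(already_deployed)
    if done != list(ENVIRONMENTS[: len(done)]):
        raise ValueError("environments must be deployed in the order DEV, TEST, PROD")
    if len(done) == len(ENVIRONMENTS):
        return None
    return ENVIRONMENTS[len(done)]


sandbox = create_sandbox("new-kpi")
print(sandbox, next_deployment_target([]))
```

Enforcing the order in one place means that a deployment to TEST or PROD cannot be requested before the preceding environment has been deployed successfully.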

This sandboxing concept enables developers to work on an exact reproduction of a static environment, including all data, and thus quickly test new developments with existing data. This not only improves the efficiency of the development and testing processes, but also increases the hit rate of CI/CD runs and supports agile operations with Scrum.

b.telligent Services at a Glance

Cloud set-up & migration

Set-up of a completely new cloud data platform in AWS for the migration from Exasol to modern technologies such as Snowflake and Datavault Builder.

Snowflake DWH implementation

Introduction of Snowflake as a central data warehouse with zero-copy cloning, massively parallel processing (MPP) and dynamic resource management for better performance and cost control.

Datavault Builder & automated creation of the physical data model

Use of the Datavault Builder for automated DWH modelling including historization and metadata management without a separate repository.

CI/CD & testing infrastructure

Setting up a CI/CD workflow with GitLab pipelines to automate deployments and testing with Soda to ensure continuous quality.

Agile sandboxing & development

Establishment of a sandbox approach for parallel and independent development on copies of productive data environments - supported by Snowflake's zero-copy cloning.

Project management & team coordination

Management of seven migration teams with approx. 80 participants under tight deadlines - including establishing a common understanding of development and architecture.

Results & Successes

Agile migration without disrupting operations: ProSiebenSat.1 migrated more than 80 data sources in parallel with day-to-day operations, thanks to a clear structure, agile methods, and strong teamwork.

Modern cloud architecture: Snowflake and Datavault Builder form the backbone of the new BI platform - powerful, flexible and perfectly orchestrated.

Efficiency & Cost Optimization: Over 50% cost savings through targeted use of resources and precise monitoring with query tagging.

Through selection and integration of suitable components, ProSiebenSat.1 succeeded in quickly making the entire target infrastructure for the new data platform available in the cloud. The combination of a sophisticated CI/CD process, valuable Snowflake features, and low-threshold integration of the remaining components and tools enabled establishment of a highly agile and efficient development process. As a result, especially in the first implementation phase, several product teams were able to start migrating their solutions largely independently and in parallel, while at the same time benefiting from each other's experience with the new technology and mode of operation.

Snowflake was particularly convincing as a DWH database and implicit repository for the Datavault Builder, especially with regard to the high requirements for reliability, performance and availability brought about by this combination. Linking the role concept to resource provisioning in Snowflake allows ProSiebenSat.1 to control costs more effectively.

The resource control provided by Snowflake has recently been made usable in the Datavault Builder too: One warehouse can be selected per job. This significantly optimizes performance and costs, as we were able to determine in a study. For this purpose, we calculated the costs per query and assigned them to the respective target objects; for the loading routes, these are mainly DELETE, INSERT and MERGE statements. Our analysis spanned four weeks before and four weeks after the transition from a single warehouse to dedicated warehouses of different sizes. Only objects that were loaded on at least 75% of the days in both periods were taken into account. The result indicates a cost reduction of over 50% and illustrates how important suitable warehouses are for the respective loading routes. Thanks to the query tags also integrated into Datavault Builder (DVB), we can determine exactly which query was executed by which job and whether the warehouse is suitable for it.

In addition to this improvement, intensive cooperation between ProSiebenSat.1 and b.telligent, as well as the partnership with Snowflake and Datavault Builder, will result in further useful features and deeper integration of the two components.
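The before/after cost comparison described above could be computed along the following lines. This is a minimal sketch, not the study's actual code: the record layout, the function name and all figures are invented for illustration, and only the 28-day periods and the 75% filter are taken from the text.

```python
from collections import defaultdict


def cost_reduction(records, days_per_period=28, min_load_share=0.75):
    """Compare summed query costs per target object before and after a change.

    records: iterable of (period, day, target_object, query_cost),
             where period is "before" or "after" (assumed layout).
    Returns (eligible objects, relative cost reduction across those objects).
    """
    cost = defaultdict(float)      # (period, object) -> summed query cost
    load_days = defaultdict(set)   # (period, object) -> days with at least one load
    for period, day, obj, query_cost in records:
        cost[(period, obj)] += query_cost
        load_days[(period, obj)].add(day)

    # Keep only objects loaded on at least 75% of the days in BOTH periods.
    min_days = min_load_share * days_per_period
    objects = {obj for _, obj in cost}
    eligible = sorted(
        obj for obj in objects
        if all(len(load_days[(p, obj)]) >= min_days for p in ("before", "after"))
    )

    before = sum(cost[("before", obj)] for obj in eligible)
    after = sum(cost[("after", obj)] for obj in eligible)
    return eligible, (1 - after / before) if before else 0.0


# Invented sample data: one object loaded daily, one loaded too rarely before.
records = []
for day in range(28):
    records.append(("before", day, "HUB_CUSTOMER", 2.0))
    records.append(("after", day, "HUB_CUSTOMER", 0.5))
    records.append(("after", day, "SAT_RARE", 5.0))
for day in range(10):
    records.append(("before", day, "SAT_RARE", 5.0))

eligible, reduction = cost_reduction(records)
print(eligible, f"{reduction:.0%}")
```

Filtering on load frequency keeps objects that were only loaded sporadically in one of the periods from distorting the comparison.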

Thanks to the stability and robustness of the employed components, there were no major failures or stops in development at any time. Thanks to ProSiebenSat.1's vibrant corporate culture, disciplined development philosophy as well as selection of modern tools with extensive documentation, excellent support and active partner management, this ambitious migration project has resulted in a future-proof BI platform.

The Tech Behind the Success

Amazon Web Services (AWS)

As an Advanced Partner of AWS, b.telligent supports its customers in the migration and setup of data platforms in the AWS cloud.

Datavault Builder

Datavault Builder's efficient visual data integration solution focuses on standardization and collaboration between business and IT staff. This allows you to increase productivity and benefit from the fastest possible time-to-insights, with full auditability and governance without additional effort.

Snowflake

b.telligent builds high-performance and scalable analytics solutions in the cloud with Snowflake.
